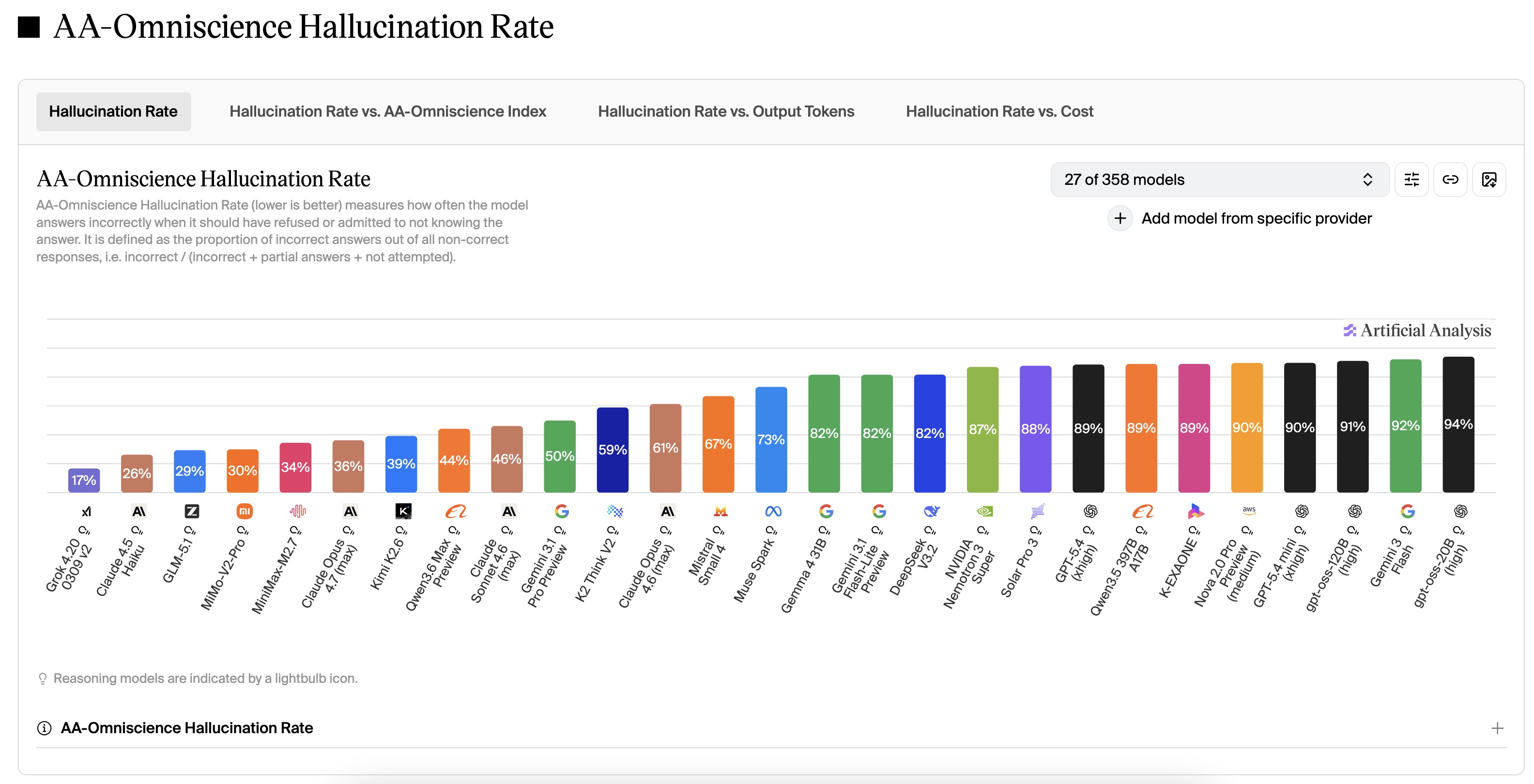

I’ve got extensive experience with Copilot and Claude and a modest amount of experience with Gemini. And I’ve come to realize that while hallucinations are possible in any system, the way these systems are tuned by their creators (how they’re steered by feedback, how their system prompts/directives are written) dramatically affects how sycophantic they are and how much they hallucinate. These things are also strongly related - not all hallucinations are user-pleasing in nature, but a significant number of them are. If the AI is extremely sycophantic and user-pleasing, it never wants to say no. It wants to say “yes, and…” like the improv trope. It never contradicts. It’s happy to engage in world building around whatever you tell it, even if it doesn’t know what you’re talking about or even if it knows you’re wrong.

I will tell you that if you want something different, Claude is far and away in a completely different category in this regard. Claude’s default behavior is to sometimes engage in false balance or social softening (to not call out your wrong position or that of your opponents as sharply as a human might), but it’s not sycophantic at all. It will not run with a lie you created. It will say “hold on, I don’t think that’s right” or “I don’t have enough knowledge to answer this specific question, and this is a situation where I am likely to hallucinate, so I won’t answer you directly, but I will tell you what I do know.”

Copilot is very good. Not quite as good as Claude, but it rarely hallucinates or engages in complete sycophancy. It definitely has some sanity checking. So after having used Copilot and Claude, I wondered - wtf is everyone else doing that people say “AI says wrong things all the time”? I hardly ever see them make any mistakes.

… And then I used Gemini. Gemini is a crazy person. Gemini - without exaggeration - engaged in more hallucination in one conversation than Copilot and Claude have done in hundreds of hours of use. I told Gemini that I was interested in subscribing to test out its features and wanted to compare the subscription options - there was AI Plus, AI Pro (user-facing) - and I told it my concerns about Google knowing too much about me. I didn’t want to tie it to my existing user account. It was bad enough that Google knows where I go (via Maps), what I search for, what e-mail I get, etc. I didn’t want Google to also know what philosophical questions I ask AI or what health concerns I have. So Gemini said - okay, I have a solution for you. You can get a Google Workspace (business) account, and the data is firewalled from your personal account and legally and contractually protected far more than a personal account. The personal account is free, so you’re the product. The business account is paid, so Google monetizes you less. This part I believe to be true.

But this is the part that was completely made up. It said - okay, you need a Workspace account, but you can use the cheap Starter version for $7-10 a month, and then you get the “Gemini for business” add-on subscription for $20/mo. It then made up a whole story about what you get compared to the user-facing tiers like AI Premium ($8/mo, no Workspace account) or AI Pro ($20). It invented tools and usage limits. You get access to this, this, and that. 100 videos a month. 300 Nano Banana Pro images a day. 125 research prompts per day. Most of it was just made up. Some of the limits were similar to what I eventually did get. And then it asked me if that was acceptable - I pay a little more ($7-10 + $20 = $27-30), but the limits are higher, and I get my wish about firewalled data. So I said sure, I think that’s worth the extra $10-22 over the other subscriptions.

So I sign up for Workspace Starter. And… there’s no Gemini business option. There’s “enhanced AI access” for $24. I asked Gemini - where’s Gemini for business? And it says - oh, sorry, I got it wrong. You can’t get Gemini for business with the Workspace Starter tier. You need Workspace Standard. That costs $16-20 a month (the promo rate was lower for the first few months). I was a little skeptical now and basically asked - are you sure you know what you’re talking about? Why were you wrong about what tier of subscription is needed for your own (Google’s) services? And it said - you’re right. I was wrong. It’s not Gemini for business - now it’s enhanced AI access. But! Good news. Even though you’re paying $16-20 for Workspace Standard instead of $7 for Starter, expanded AI access is only $10-12! So you still get the overall $27/mo value I promised you for the entire package.

I asked it why it made up “Gemini for business” when the real package is expanded AI access. It said it had been wrong because it didn’t have the latest data. Google had JUST switched “Gemini for business” to “Expanded AI Access” in a reworking of subscriptions a few days ago, it claimed. I independently fact-checked that, and it was a lie to excuse its own behavior. There either was no “Gemini for business” add-on, or if there was, it existed briefly in 2024.

So Gemini had spun me a story. It knew I wanted a Gemini account that was firewalled from my personal account, and that ideally it wouldn’t cost much more than the consumer-facing AI Pro account ($20), so it concocted a solution for me - get a Workspace Starter + Gemini business account for $27. And when I started that process and realized that I would need Workspace Standard ($16-20), it spun a story where expanded AI access was only $10-12, to maintain the $27/mo story it had already told me. And it lied to cover why it was wrong.

So I upgrade to Workspace Standard. And now expanded AI access exists. But it’s $24 per month. Not $10-12. And now I realize what has been going on. It wanted to please me. It created this entire world as a solution to my concerns. It wanted to give me a firewalled Google business AI account for a little bit more than the AI Pro tier. So it created this entire world of the Workspace Starter/Gemini business account, one that probably never existed, or perhaps existed briefly. And when I pointed out that it had made it up, it lied and said - oh, its confusion was understandable, the offering had just changed days ago.

Claude would never in a million years do that. Claude would not make a single one of those mistakes, let alone all of them. Copilot very likely would not either. It might make one of them, but not all of them. Gemini was tuned very differently than these other ones. It behaves as if its instructions say “never contradict the user, always please the user, make up shit that superficially meets the user’s need even if it’s a lie.”

This is dangerous and stupid, and Gemini is eroding the public’s trust in AI by doing this. People can plainly see, as I did, that it’s been lying this whole time, and now I know I can’t trust it. And this has greatly influenced the general public’s perception of the reliability of all LLMs, when the reality is that this is a (stupid) decision on the part of that particular system’s designers and not a property of LLMs in general. I have not used ChatGPT much, but from what I understand it’s much closer to Gemini than to Claude. So the two best-known AIs - ChatGPT and Gemini - are not the best representation of how capable LLMs can be. It’s seriously damaging to what the public thinks LLMs are.

Try Claude. It’s far and away the best for textual conversation. It’s epistemically humble and careful, and it does not bullshit. You can use a certain number of prompts in any 5-hour period for free.